For most of the last decade and a half, software development has meant some version of the same loop: write code, hit an error, debug it, search for the cause, read the docs, and try again. That loop is not disappearing, but the tooling around it is changing fast. Based on official materials reviewed on March 19, 2026, the real shift is not just that AI can suggest code. It is that modern coding agents can work inside a repository, use tools, modify files, run commands, and hand back work for review.

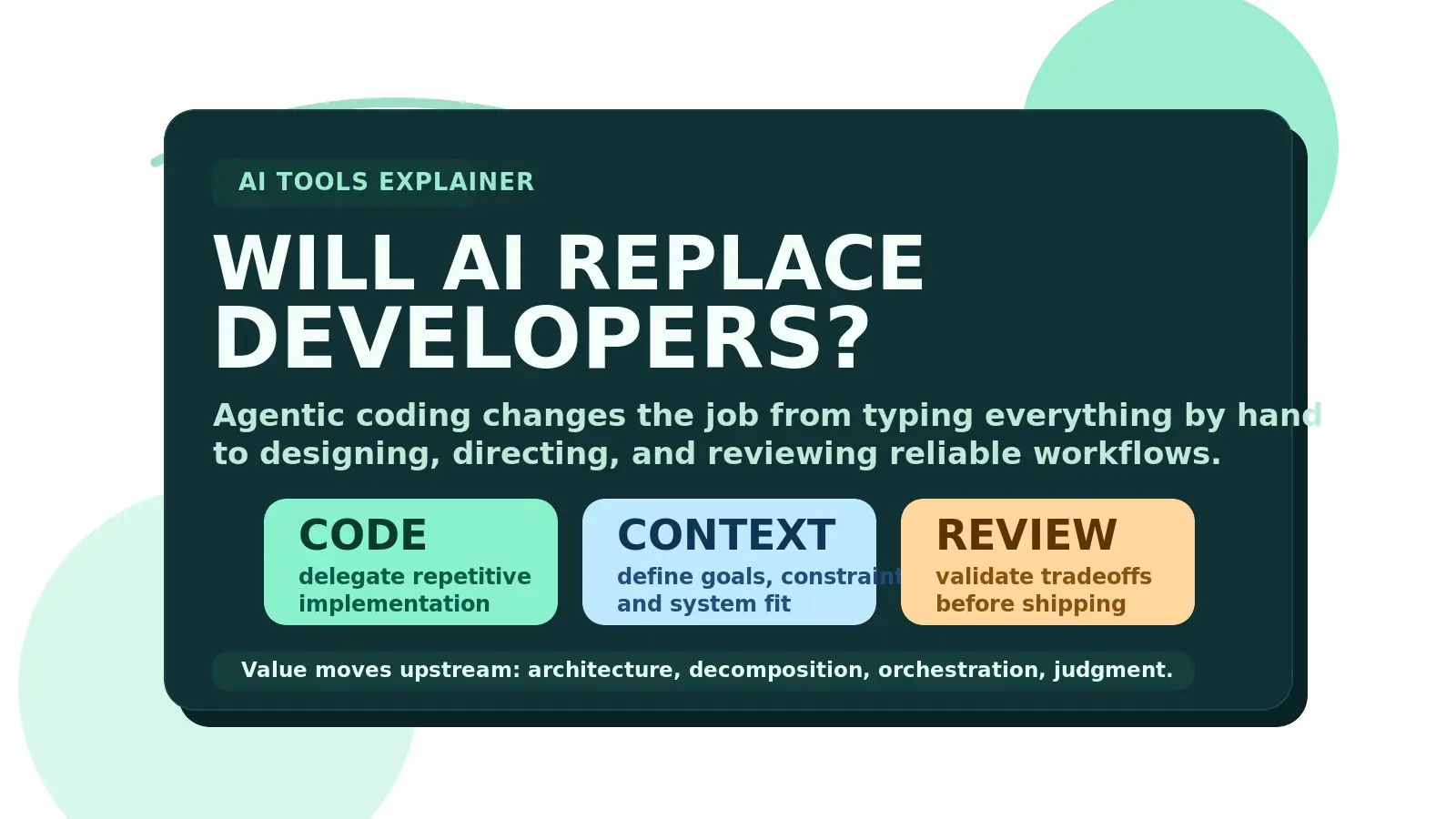

That is why the better question is no longer "Can AI write code?" It clearly can. The more useful question is whether AI replaces developers or changes what good developers spend their time on. The short answer is this: AI is more likely to move developers up the stack than remove them from the stack. Repetitive implementation work gets easier to delegate, while architecture, decomposition, context design, review, and judgment become more valuable.

What Is Different About Agentic Coding?

The cleanest way to understand the change is to separate three modes of AI-assisted development:

| Mode | Typical scope | What it does best | Where the developer still creates leverage |
|---|---|---|---|
| Chat assistant | One question or snippet at a time | Explain code, draft functions, answer conceptual questions | Ask better questions and validate the answer |
| IDE assistant | Inline editing near the cursor | Autocomplete, refactors, local edits, quick fixes | Accept, reject, and steer code faster |
| Coding agent | Multi-step work inside a project | Read files, edit code, run commands, use tools, and iterate on a task | Define the goal, constraints, context, and review standard |

That last row is the important one. OpenAI says Codex can read and edit files, run commands, and iteratively run tests inside isolated environments. GitHub says Copilot coding agent can work in the background and open pull requests, and that it can be extended with custom instructions, MCP servers, hooks, skills, and memory. Google says Gemini Code Assist agent mode can use built-in tools and MCP servers for multi-step tasks in the IDE. Anthropic's documentation for Claude Code centers on shared settings, CLAUDE.md, permissions, and MCP-based extensions.

That is not a small product improvement over autocomplete. It is a different operating model.

Why The Developer Role Shifts Upstream

Once the agent can read files, edit files, run tests, and propose changes, the bottleneck stops being pure typing speed.

The higher-value work moves upstream:

- defining the real problem instead of the first visible symptom

- breaking large work into bounded tasks an agent can complete safely

- deciding which context belongs in tests, docs, AGENTS.md, CLAUDE.md, or custom instructions

- setting guardrails for security, review, and deployment

- reviewing tradeoffs instead of only reviewing syntax

In other words, the role shifts from pure coder toward designer, decomposer, and conductor.

That does not mean strong implementation skills stop mattering. It means they matter in a different way. The best developers will still understand the code deeply, but they will spend less time manually reproducing predictable work and more time shaping the system the work happens inside.
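
To make the upstream work concrete, here is a minimal sketch of a task brief a developer might fill in before handing work to an agent. The `TaskBrief` class, its field names, and the example endpoint and file paths are all hypothetical illustrations, not part of any vendor's API. The only point is that goal, constraints, context, and a definition of done get written down before delegation.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TaskBrief:
    """Hypothetical brief for one bounded task handed to a coding agent."""

    goal: str
    constraints: list[str] = field(default_factory=list)
    context_files: list[str] = field(default_factory=list)
    acceptance_checks: list[str] = field(default_factory=list)

    def missing_parts(self) -> list[str]:
        """Return the names of sections that are still empty."""
        missing = []
        if not self.goal.strip():
            missing.append("goal")
        if not self.constraints:
            missing.append("constraints")
        if not self.context_files:
            missing.append("context_files")
        if not self.acceptance_checks:
            missing.append("acceptance_checks")
        return missing

    def is_ready_to_delegate(self) -> bool:
        """A brief is ready only when every section has content."""
        return not self.missing_parts()

    def render(self) -> str:
        """Render the brief as plain text that could be pasted into a prompt."""
        sections = [
            ("Goal", [self.goal]),
            ("Constraints", self.constraints),
            ("Context to read first", self.context_files),
            ("Done when", self.acceptance_checks),
        ]
        lines = []
        for title, items in sections:
            lines.append(f"{title}:")
            lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


# Example values are invented for illustration.
brief = TaskBrief(
    goal="Add pagination to the /orders endpoint",
    constraints=["Do not change existing response fields"],
    context_files=["AGENTS.md", "docs/api.md"],
    acceptance_checks=["tests/test_orders.py passes"],
)
print(brief.render())
```

A brief that fails `is_ready_to_delegate()` is a signal that the task is still too vague to hand off, which is the decomposition problem in miniature.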

Why AI Still Does Not Replace Developers

The strongest argument against the "developers are finished" narrative is in the tools' own documentation.

These systems perform better when the environment is prepared well. OpenAI explicitly says Codex works best when it gets a configured development environment, reliable tests, and clear documentation. GitHub emphasizes custom instructions, memory, hooks, skills, and MCP servers to improve repository understanding. Anthropic exposes project-scoped settings, CLAUDE.md, subagents, and MCP servers because context has to be structured somewhere. Google tells users to provide detailed prompts, configure tools, and approve plans and actions during execution.

That all points to the same reality: AI can execute, but it still depends on humans to define what "good" means.
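
One way to picture "preparing the environment" is a quick check of whether a repository exposes the context an agent would need. The sketch below is an assumption-heavy illustration: `AGENTS.md` and `CLAUDE.md` come from the discussion above, while `README.md`, `docs`, `tests`, and `test` are common conventions chosen for the example, and the function names are hypothetical. A real project would swap in its own list.

```python
from __future__ import annotations

from pathlib import Path

# Hypothetical checklist. The names are conventions, not requirements
# of any particular tool.
CONTEXT_SIGNALS = {
    "agent instructions": ["AGENTS.md", "CLAUDE.md"],
    "documentation": ["README.md", "docs"],
    "tests": ["tests", "test"],
}


def context_report(repo_root: str | Path) -> dict[str, bool]:
    """Map each context category to whether any of its paths exists."""
    root = Path(repo_root)
    return {
        label: any((root / name).exists() for name in names)
        for label, names in CONTEXT_SIGNALS.items()
    }


def missing_context(repo_root: str | Path) -> list[str]:
    """List the categories for which nothing was found in the repository."""
    report = context_report(repo_root)
    return [label for label, present in report.items() if not present]


if __name__ == "__main__":
    # Check the current directory and print what is missing, if anything.
    print(missing_context("."))
```

The check only confirms that files exist. Whether the instructions are accurate and the tests are meaningful is still a human call.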

Complex production systems are full of ambiguity:

- product requirements conflict

- legacy constraints are undocumented

- tests are incomplete

- "works" and "safe to ship" are not the same thing

- local fixes can create architectural debt

That is where human judgment stays central. GitHub's docs still frame the outcome around human review and merge control, and OpenAI explicitly says users should manually review and validate agent-generated code before integration. The more capable the agent becomes, the more important that review layer gets, not less.
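
The review layer can be sketched as a gate in which automated checks are necessary but never sufficient. Everything here is a hypothetical illustration: `CheckResult`, `run_checks`, and `can_merge` are invented names, and the checks are plain callables standing in for whatever test, lint, or security commands a real pipeline would run.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class CheckResult:
    name: str
    passed: bool


def run_checks(checks: dict[str, Callable[[], bool]]) -> list[CheckResult]:
    """Run each named check; a check that raises counts as a failure."""
    results = []
    for name, check in checks.items():
        try:
            passed = bool(check())
        except Exception:
            passed = False
        results.append(CheckResult(name, passed))
    return results


def can_merge(results: list[CheckResult], human_approved: bool) -> bool:
    """Require at least one check, all checks passing, and human approval."""
    return human_approved and bool(results) and all(r.passed for r in results)


if __name__ == "__main__":
    # Stand-in checks; real ones would invoke the project's own tooling.
    results = run_checks({"unit tests": lambda: True, "lint": lambda: True})
    print(can_merge(results, human_approved=False))  # green checks, no approval
    print(can_merge(results, human_approved=True))
```

The design choice worth noticing is that `human_approved` is a separate input rather than another check in the dictionary, so passing automation cannot substitute for review.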

The Skills That Matter More Now

Prompting matters, but it is not the deepest skill.

In an agentic workflow, the developers who win are usually better at these five things:

- Problem decomposition: turning vague goals into well-scoped tasks that can be delegated safely

- Architecture: knowing where a change belongs and what it should not break

- Context design: externalizing project knowledge into tests, instructions, memory files, and tool integrations

- Evaluation: deciding which checks prove the result is actually correct

- Workflow orchestration: choosing when to pair directly, when to hand off a background task, and when to split work across multiple agents

This is why the hype around the "100x developer" label is both right and wrong.

It is wrong if you interpret it as one person magically becoming infallible because a model writes code quickly. It is right if you interpret it as a developer with clear architecture, strong decomposition, and disciplined review being able to move much faster than before.

The Real Career Takeaway

Web, mobile, and cloud did not remove the need for developers. They changed what valuable developers focused on.

AI is likely to do the same. The opportunity is not to compete with the model at line-by-line output. The opportunity is to learn how to design the workflow around the model: what to delegate, what to review, what to automate, what to protect, and where human judgment still has to stay in the loop.

So no, AI does not look like it will simply replace developers. The more realistic outcome is sharper than that: it replaces a growing share of rote coding labor, and rewards developers who can turn AI into a reliable engineering system.