The first time I used OpenClaw, my reaction was simple: this thing is good.

It was fast, capable, and surprisingly useful once the automation started clicking. In the right setup, it feels less like a chatbot and more like a real operator that can keep work moving.

Then the bill started climbing.

At first I wrote it off. If a tool saves real time, some spend is expected. But after a while the pattern got uncomfortable. I was not doing anything obviously extreme, yet the cost kept stacking up in the background.

That is the trap with OpenClaw. If you use it casually, it is easy to burn money without feeling the leak. But if you understand how the costs are created, the problem becomes much more manageable.

When I reviewed OpenClaw's official docs on March 22, 2026, the core mechanics were clear:

- context includes much more than your latest message

- /compact summarizes older history instead of dragging the full session forever

- /new, /reset, /status, and /model are the practical control points

- Heartbeat can run on its own cadence, model, and context rules

- Ollama gives OpenClaw a local-model path with zero configured model cost

That matched what I had already felt in actual use. Once I started managing those levers deliberately, my cost dropped by roughly 80-90% without a dramatic quality loss.

Where the money actually goes

The easiest way to understand the bill is to stop thinking only about prompts and start thinking about what OpenClaw is sending on every run.

| Cost leak | Why it gets expensive | What changed the most for me |
|---|---|---|
| Context bloat | Old messages, tool output, system prompt, and attachments keep riding along | Check /status, inspect /context list, use /compact, reset when the topic changes |
| One premium model for every task | Cheap work gets routed through the same expensive reasoning model | Split models by task and switch with /model or per-agent defaults |
| Background Heartbeat turns | Scheduled checks still consume agent turns and tokens | Lower the frequency, limit active hours, enable isolatedSession, enable lightContext, use a cheaper or local model |

That is the whole article in one table.

The rest is just execution discipline.

1. The first thing to fix is the context window

Most people underestimate this.

OpenClaw's context docs are explicit that "context" is everything sent to the model for a run, not just the one line you typed. In practice that means the bill is shaped by:

- the system prompt OpenClaw builds

- the conversation history for that session

- tool calls and tool results

- attachments such as files, images, or audio

That is why a short message can still be expensive. The visible prompt may be tiny while the actual payload is large.
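To make that concrete, here is a rough sketch of how the payload adds up. The token counts are invented for illustration, not measured from OpenClaw, and `RunPayload` is a hypothetical name, not part of any OpenClaw API.

```python
from dataclasses import dataclass


@dataclass
class RunPayload:
    """Hypothetical breakdown of what one run sends to the model, in tokens."""
    system_prompt: int
    history: int
    tool_results: int
    attachments: int
    new_message: int

    def total(self) -> int:
        """Everything the model is billed for on this run."""
        return (self.system_prompt + self.history + self.tool_results
                + self.attachments + self.new_message)

    def visible_share(self) -> float:
        """Fraction of the payload that is the message you actually typed."""
        return self.new_message / self.total()


# Illustrative numbers only: a 20-token message late in a long session.
payload = RunPayload(system_prompt=3_000, history=40_000, tool_results=15_000,
                     attachments=2_000, new_message=20)
print(payload.total())                   # 60020
print(f"{payload.visible_share():.4%}")  # 0.0333%
```

With numbers like these, the line you typed is a rounding error. Almost all of the spend is history and tool output riding along.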

My first habit now is checking /status whenever a session starts feeling long. If I want more detail, I use /context list. Those two commands are enough to make cost visible again.

Once you start doing that regularly, the pattern becomes obvious. Sessions do not usually become expensive because of one brilliant prompt. They become expensive because you kept carrying old history far past the point where it was still useful.

The simplest rule I follow now is this: if the topic changes, I stop trying to preserve the old session.

OpenClaw's compaction docs explicitly note that if you need a fresh slate, /new or /reset starts a new session id. That matters more than people think. A clean session is often the cheapest optimization available.

2. Do not reset everything when /compact is enough

That said, a full reset is not always the right answer.

Sometimes you do need continuity. Maybe the broad problem is the same, but you no longer need every back-and-forth from the past hour. That is where /compact does real work.

According to OpenClaw's compaction docs, compaction summarizes older conversation into a compact summary entry while keeping the recent messages intact. In plain English, it preserves the useful shape of the conversation without making you keep paying for the entire transcript.

This became one of my default habits in longer sessions.

I use it when:

- the task is still the same

- the recent context still matters

- the session feels bloated

- I do not want to lose the current working thread

If the topic has changed, I use /new or /reset.

If the topic is the same but the session is getting heavy, I use /compact.

That one distinction saves a lot of waste.
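Written out as a tiny decision function, the rule looks like this. It is only a sketch of my own habit: `session_action` and its two flags are hypothetical, and judging whether a session is "heavy" is still a call you make after looking at /status.

```python
def session_action(topic_changed: bool, session_heavy: bool) -> str:
    """Return the slash command to reach for, or "" to just keep going."""
    if topic_changed:
        # A new topic does not need the old history at all.
        return "/new"  # /reset works too; both start a fresh session
    if session_heavy:
        # Same topic, but the transcript is getting expensive to carry.
        return "/compact"
    return ""


print(session_action(topic_changed=True, session_heavy=True))    # /new
print(session_action(topic_changed=False, session_heavy=True))   # /compact
print(repr(session_action(topic_changed=False, session_heavy=False)))  # ''
```

The topic check comes first on purpose: a changed topic wins over a heavy session, because compacting history you no longer need still means paying for the summary.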

3. I stopped using one expensive model for everything

The second big savings came from model strategy.

Many people make the same mistake at the start: they find the best model they have access to, set it as the default, and leave it there for everything.

That feels efficient, but it usually is not.

OpenClaw's model docs make the product direction clear. The recommended policy is to keep a strong primary model, but use fallbacks for cost-sensitive or lower-stakes work. OpenClaw also supports per-agent model overrides, and /model lets you switch models directly in chat.

That means there is no real reason to send every task through the same expensive model.

In practice, I split work like this:

- cheap model for summaries, rewrites, first drafts, and repetitive cleanup

- stronger model for high-stakes reasoning, harder coding, and decisions where quality really matters

- specialized model when a task clearly benefits from a specific provider or capability

The important mindset shift is this:

The goal is not maximum model quality on every turn. The goal is enough model quality for the task in front of you.

That change alone often removes a lot of waste without the user feeling much difference at all.
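Here is a minimal sketch of that split as a lookup table. The task labels and tier names are my own placeholders, not OpenClaw identifiers. In practice the tier would map to whatever models you have configured, and you would apply it with /model or per-agent defaults.

```python
# Hypothetical task labels and tiers, for illustration only.
TIER_BY_TASK = {
    "summary": "cheap",
    "rewrite": "cheap",
    "first_draft": "cheap",
    "cleanup": "cheap",
    "hard_coding": "strong",
    "high_stakes_reasoning": "strong",
}


def pick_tier(task: str) -> str:
    """Look up the tier for a task; unknown tasks fall back to the strong tier."""
    return TIER_BY_TASK.get(task, "strong")


print(pick_tier("summary"))        # cheap
print(pick_tier("hard_coding"))    # strong
print(pick_tier("something_new"))  # strong
```

Defaulting unknown work to the strong tier is a deliberate choice in this sketch: a misrouted task costs a little extra money rather than a bad answer.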

4. The hidden bill is Heartbeat

The last major leak took me the longest to notice.

Heartbeat sounds harmless because it is usually doing small work: checking whether something needs attention, scanning for a follow-up, or returning HEARTBEAT_OK when nothing matters.

But OpenClaw's Heartbeat docs are direct about the mechanics. Heartbeats are still full agent turns. The default cadence is typically 30m, or 1h for some Anthropic authentication setups, and 0m disables them.

That means a short interval can quietly burn tokens all day even when you are not actively chatting.

The first fix is cadence.

If something does not need to run every 30 minutes, do not let it run every 30 minutes. Stretch it to an hour, longer, or turn it off entirely.

The second fix is schedule.

Heartbeat supports activeHours, which means you can stop pretending your agent needs to wake up at every hour of the day. If the checks only matter during work hours, constrain them to work hours.
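The arithmetic behind cadence and schedule is simple enough to sketch. The helper below is hypothetical and assumes evenly spaced runs inside the active window. It ignores how OpenClaw handles the exact window boundaries.

```python
def heartbeat_runs_per_day(interval_minutes: int, active_hours: float = 24.0) -> int:
    """Count scheduled heartbeat turns per day; an interval of 0 means disabled."""
    if interval_minutes <= 0:
        return 0
    return int(active_hours * 60 // interval_minutes)


print(heartbeat_runs_per_day(30))      # 48  (every 30m, around the clock)
print(heartbeat_runs_per_day(60, 14))  # 14  (every 1h, 08:00 to 22:00)
print(heartbeat_runs_per_day(0))       # 0   (disabled)
```

Going from 48 runs a day to 14 is roughly 70% fewer paid turns before touching the model or the context at all.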

Those two changes matter immediately, but the most important Heartbeat settings are the context controls.

OpenClaw's cost notes recommend:

- isolatedSession: true so heartbeats do not keep carrying full conversation history

- lightContext: true so heartbeat runs can stay focused on HEARTBEAT.md

- a cheaper model override for low-stakes background work

The docs are especially clear on isolatedSession: true: it can shrink a heartbeat run from a full-history turn to a small, lightweight one. That is exactly the kind of silent waste most people never notice until after the bill arrives.
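To see why the context controls matter more than they look, here is a back-of-the-envelope sketch. Both per-run token figures are assumptions I made up for illustration, not numbers from OpenClaw's docs. The 48 runs correspond to a 30-minute cadence around the clock.

```python
def daily_heartbeat_tokens(runs_per_day: int, tokens_per_run: int) -> int:
    """Total tokens spent on heartbeat turns in one day."""
    return runs_per_day * tokens_per_run


# Hypothetical per-run sizes; check /status for real numbers from your own sessions.
FULL_HISTORY_RUN = 50_000    # heartbeat that drags the whole conversation along
ISOLATED_LIGHT_RUN = 3_000   # heartbeat with an isolated session and light context

before = daily_heartbeat_tokens(48, FULL_HISTORY_RUN)
after = daily_heartbeat_tokens(48, ISOLATED_LIGHT_RUN)
print(before, after, f"{1 - after / before:.0%}")  # 2400000 144000 94%
```

The exact ratio depends entirely on how long your sessions get, but the shape holds: the per-run payload multiplies against every scheduled turn.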

5. Heartbeat does not need a premium model

This was the most practical realization of the whole setup.

Heartbeat tasks are usually not hard.

They are things like:

- is there anything urgent to check?

- should I send a reminder?

- should I scan for a follow-up?

Those are not premium-reasoning jobs.

OpenClaw supports a separate Heartbeat model override, which means your main workflow can stay on a strong model while Heartbeat runs on something cheaper. That is the cleanest setup for most people.

And if you want to push costs down even harder, OpenClaw's Ollama docs make the local route straightforward. You can install Ollama, pull a local model, and point Heartbeat at it. OpenClaw's docs also note that local Ollama models are assigned $0 cost in the model catalog.

That is why Ollama is such a strong fit here. Heartbeat is repetitive, lightweight, and usually does not need top-tier reasoning. Local is often good enough.

This is the shape of the config I would use as a starting point:

    {
      agents: {
        defaults: {
          heartbeat: {
            every: "1h",
            activeHours: { start: "08:00", end: "22:00" },
            isolatedSession: true,
            lightContext: true,
            model: "ollama/llama3.2:1b",
          },
        },
      },
    }

The exact model should match your machine and tolerance for local-model quality. The point is not the specific model name. The point is separating "background maintenance" from "main reasoning work."

6. My default OpenClaw cost playbook now

After a lot of trial and error, this is the setup that feels sane:

- If a session gets long, check /status.

- If I need to see what is inflating it, check /context list.

- If I still need continuity, run /compact.

- If the topic changed, stop being sentimental and use /new or /reset.

- Route low-stakes work to cheaper models.

- Keep stronger models for the turns where quality actually matters.

- Treat Heartbeat as real spend, not invisible automation.

- Slow Heartbeat down, confine it to active hours, and keep it on a cheap or local model.

That is the whole system.

The point is not to avoid good models. The point is to stop wasting good models on the wrong work.

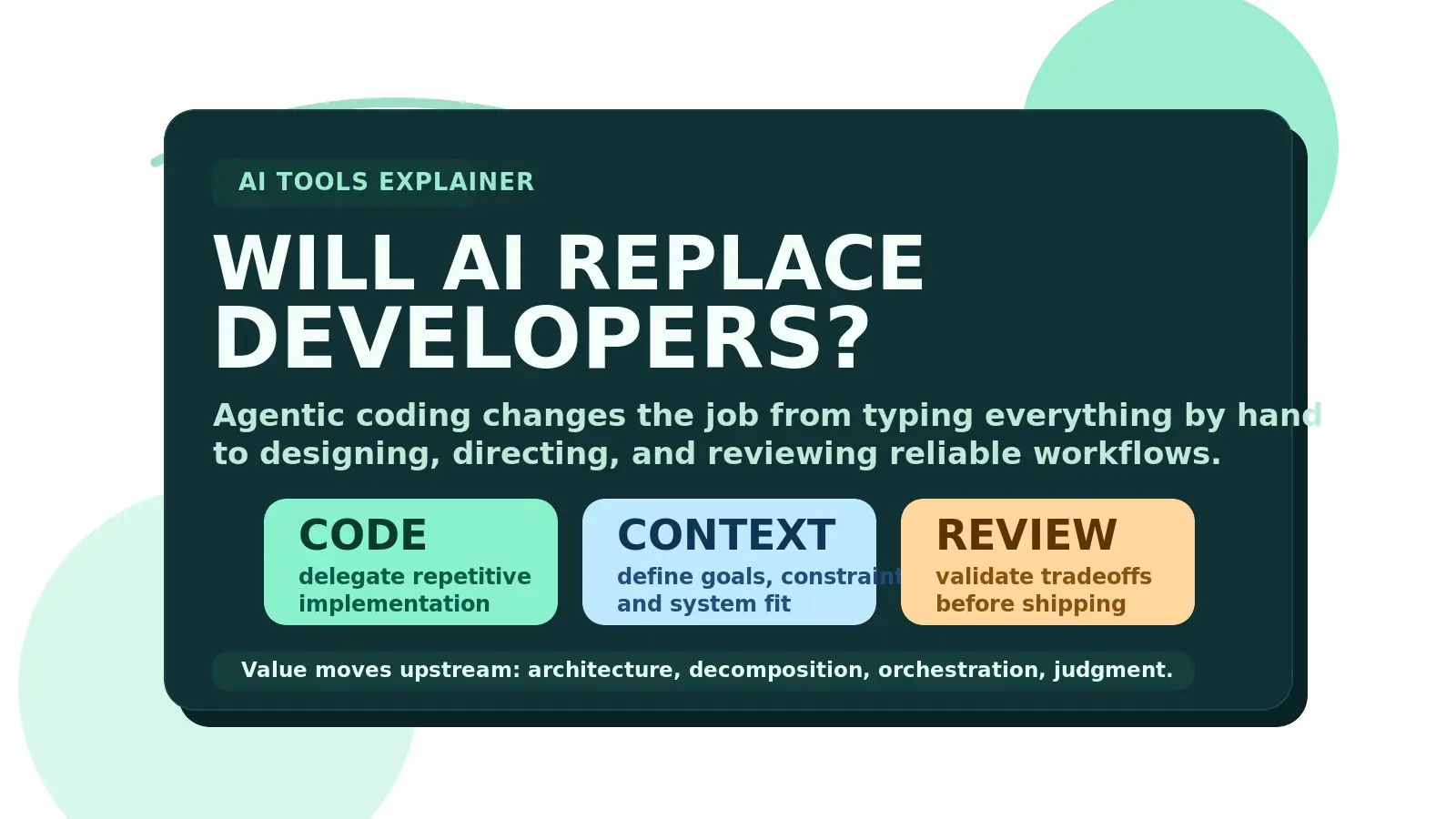

The real lesson

OpenClaw is not expensive only because powerful models cost money.

It gets expensive because people usually make three structural mistakes at once:

- they let sessions grow until context becomes bloated

- they use one premium model for everything

- they let Heartbeat run like free background magic when it is actually paid agent work

Once I fixed those three things, the spending pattern changed immediately.

The quality did not collapse. The workflow did not become annoying. The product did not suddenly become weaker.

I just stopped paying premium prices for session history, low-stakes turns, and background checks that never needed premium treatment in the first place.

That is why I think the right framing is not "use OpenClaw less."

The right framing is "understand what creates the bill, then use OpenClaw correctly."

That difference is much bigger than it sounds.

If you are comparing more AI workflow patterns beyond this one, the AI Tools hub and AI automation for solopreneurs are the next two places I would start.